In my day-to-day development work at Firstborn, we often run into questions that don’t have a clear answer – for example, which technique performs best for a given task.

When something like that happens, depending on the time constraints, we may either do a quick test, or just go with what we assume is best – creating a theory and sticking with it. At times, however, an issue becomes so important that it requires more extensive research – having the scientific method applied to it, so to speak.

I ran into one of those recently with a website we’ve just created – Expedition Titanic. In that website, we have a few videos running in quasi-fullscreen (taking the whole website space, that is) and we were having trouble with performance on some computers – dropping frames, not playing smoothly, etc.

In previous projects, we had some assumptions of how full-site videos should be encoded – resolution, bitrate, codec, etc. Most of those were based on actual experience, but it was scattered information without any real data to back it up.

Video playback performance was becoming more of an issue in every website I’ve been working on – this is also true of the previous website I’ve developed here, for the 5 REACT campaign – and I decided to stop relying on myths, personal theories or word-of-mouth and do some real tests – rather, benchmarks – to actually come up with the best solutions for video playback in Flash in terms of performance.

My question was not about the video quality itself, but rather its playback quality – how smooth the video frames were being played, how many frames were being dropped; in sum, what was the impact of different encoding methods on playback performance. We generally assume F4V (H.264) videos have better quality, but which one requires more from the CPU to decode? What’s the impact of actual resolution and bitrate?

I have to confess I had some personal beliefs I wanted to put to the test with these benchmarks. My theories were as such:

- On2 VP6 (FLV) videos are faster to decode than H.264 (F4V) videos.

- H.264 decoding is faster on multi-core machines; On2 VP6, not as much.

- Overall, OS X has even worse decoding performance for H.264 videos.

- Videos with higher bitrates are slower to decode and this impacts the rendering speed of high-quality videos.

- Video playback performance in Linux is horrible.

Still, I approached the problem with a neutral stance and would be as happy proving any of those theories as I would be disproving them. The main reason I wanted to do the test in the first place is that I wasn’t really sure any of them were true, but I still used them as guidelines when creating content.

Measuring video playback quality

My initial plan to measure the playback quality was using the (Flash 10 only) droppedFrames property of the NetStreamInfo object and the (undocumented) decodedFrames property of the NetStream object. That way, I hoped to be able to measure the number of skipped frames and measure the time spent decoding in a more rendering-agnostic fashion.

After some initial tests, however, I decided against it because the values I got were just not reliable enough. The behavior of both properties was predictable for FLVs (the sum of decodedFrames and droppedFrames would be the total number of frames in the video), but their values while playing an F4V video were quite undecipherable. There seemed to be a correlation between playback quality and the value of both properties, but their actual relationship is a mystery – for example, does droppedFrames contain the number of frames dropped during decoding, or during decoding and playback? Does decodedFrames contain the number of frames decoded, or decoded and rendered? The numbers seem to mean different things at different times, given that either property can have a higher value than the other and that they behave differently depending on the video format.

Due to this, I decided to run a 30fps video inside a 60fps SWF and use the resulting SWF frame rate (measured through onEnterFrame events) as an indicator of how much time was being spent on video decoding and rendering. Nevertheless, the numbers of frames decoded and dropped are still included in the final results.
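As a rough illustration of that measurement (the original benchmark was written in ActionScript and its code is not shown here), the sketch below computes an average frame rate from a list of per-frame timestamps, such as the ones an onEnterFrame handler could record, and expresses how far it falls short of the 60fps target. The function names and the idea of collecting timestamps in a list are assumptions made for this example.

```python
def average_fps(timestamps_ms):
    """Average frames per second over a list of frame timestamps (milliseconds)."""
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")
    elapsed_ms = timestamps_ms[-1] - timestamps_ms[0]
    if elapsed_ms <= 0:
        raise ValueError("timestamps must be strictly increasing overall")
    # N timestamps delimit N - 1 frame intervals.
    return (len(timestamps_ms) - 1) * 1000.0 / elapsed_ms


def frame_rate_shortfall(measured_fps, target_fps=60.0):
    """Fraction of the target frame rate that was lost (0.0 means none)."""
    return max(0.0, 1.0 - measured_fps / target_fps)


if __name__ == "__main__":
    # Hypothetical timestamps: one frame every 25 ms.
    fps = average_fps([0, 25, 50, 75, 100])
    print(fps, frame_rate_shortfall(fps))
```

The larger the shortfall while a given file is playing, the more of each frame's time budget is presumably going to decoding and rendering that file, which is what makes the number usable as a relative comparison on the same machine.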

Test rationale

The idea of the test was finding out the best combination of encoding variables for proper video playback, so I decided to use these parameters:

- Format: FLV (On2 VP6) and F4V (H.264)

- Bitrate: 500Kbps, 1000Kbps, 1500Kbps, 2000Kbps

- Resolution: 1920×1080, 960×540, 480×270

This gives a total of 24 different encoded files for the same video. They would all be rendered at the same size (taking the whole browser space); the idea is that it’d allow me to have a better understanding of how resolution, bitrate and format changes impacted the playback quality, with the same area being drawn.
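The parameter grid above can be enumerated mechanically. This is only a sketch of the combinations, with made-up dictionary keys; it says nothing about how the files were actually named or encoded.

```python
from itertools import product

FORMATS = ["FLV (On2 VP6)", "F4V (H.264)"]
BITRATES_KBPS = [500, 1000, 1500, 2000]
RESOLUTIONS = [(1920, 1080), (960, 540), (480, 270)]


def build_test_matrix():
    """Return one entry per format/bitrate/resolution combination."""
    return [
        {"format": fmt, "bitrate_kbps": kbps, "width": w, "height": h}
        for fmt, kbps, (w, h) in product(FORMATS, BITRATES_KBPS, RESOLUTIONS)
    ]


if __name__ == "__main__":
    matrix = build_test_matrix()
    print(len(matrix))  # 2 formats x 4 bitrates x 3 resolutions = 24
```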

The file used was a rendering of the intro to the Expedition Titanic’s “Explore” area, originally rendered at 1920×1080 and set at 30FPS.

The testing project was a small SWF file that would load a file, play it, unload it, and skip to the next file. The SWF was set at 60fps, using maximum quality; nothing else was present on the staging area (the idea was to test video decode speed without compositing tradeoffs), and the video was scaled to fit inside the full browser area (similar to the Stage scaleMode of StageScaleMode.NO_BORDER). The executing SWF was kept focused during the whole test.
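For readers unfamiliar with NO_BORDER-style scaling: the content is scaled uniformly until it covers the whole available area, so one dimension matches the area exactly and the other may overflow and get cropped. A minimal sketch of that arithmetic follows; the function name is hypothetical, and this is not the code used in the benchmark.

```python
def no_border_fit(video_w, video_h, area_w, area_h):
    """Scale a video uniformly so it covers the whole area (cropping overflow).

    Returns (scaled_width, scaled_height, scale).
    """
    if min(video_w, video_h, area_w, area_h) <= 0:
        raise ValueError("all dimensions must be positive")
    # Covering the area means taking the larger of the two axis ratios;
    # taking the smaller one would letterbox the video instead.
    scale = max(area_w / video_w, area_h / video_h)
    return video_w * scale, video_h * scale, scale


if __name__ == "__main__":
    # A 480x270 file shown in a hypothetical 1000x540 browser area.
    print(no_border_fit(480, 270, 1000, 540))
```

Since every file in the test shares the same 16:9 aspect ratio, they all end up covering the same on-screen area regardless of their encoded resolution, which is what keeps the drawing cost comparable between files.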

I used On2 VP6 as the sole codec for the FLV videos. Sorenson Spark, while considered to have better decoding performance, is usually not a parameter on the video encoding decision equation anymore due to its sub-standard encoding quality.

For the FLV encoding, I used Adobe Flash Video Encoder (CS3) with normal (1-pass) encoding. For the F4V encoding, I used Adobe Media Encoder (CS4) with a “High” profile, Level 4.1, and 2-pass VBR where both the target and maximum bitrates were set to the bitrate being tested. And since the idea was testing raw playback quality, as opposed to accurate seeking ability or any other parameter, keyframe distance was left on automatic for both.

The videos were encoded without an audio track.

Results

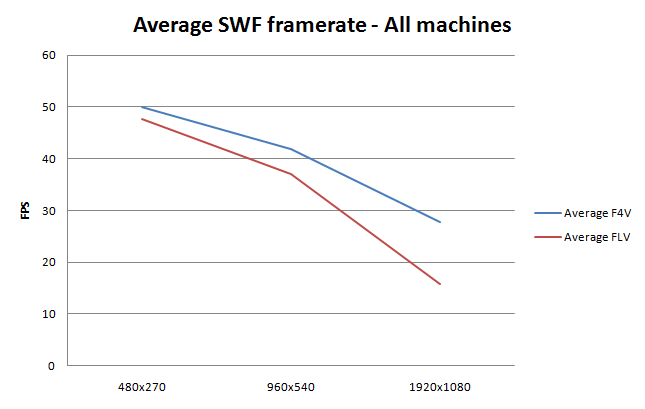

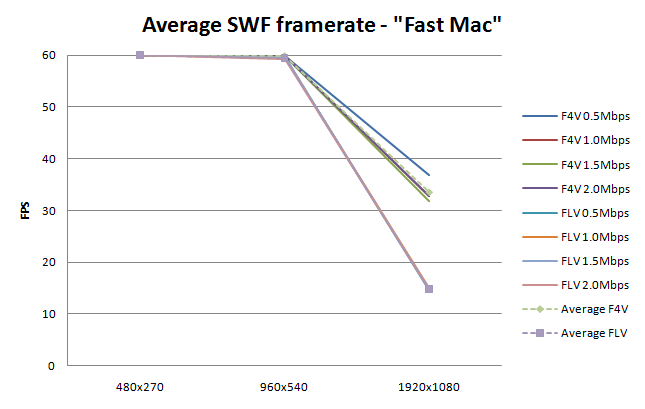

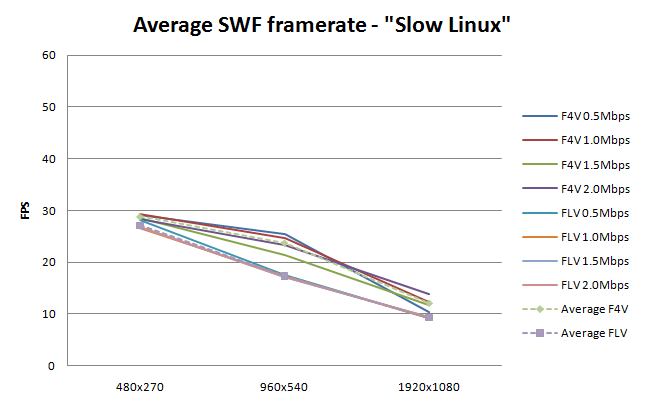

Here are the test results, plotted in line charts. The question these numbers try to answer is: when playing a 30FPS video on a 60FPS SWF, what’s the actual rendering framerate?

The test was run on a number of different computers, from low-end to high-end, Windows PCs and Macs, multi-core and single-core machines. The idea was not measuring the performance of each setup against the others – they had different specs, and were run at different resolutions – but rather to see the differences between different videos being played on the same machine.

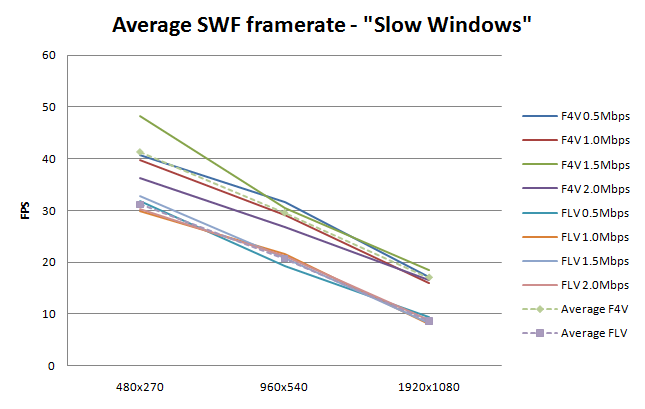

“Slow Windows” was an old Dell laptop running Windows XP SP2 at 2GHz (Intel single core) with 1GB memory. Tests used Firefox 3.6.8 and Flash Player 10.1.82.76.

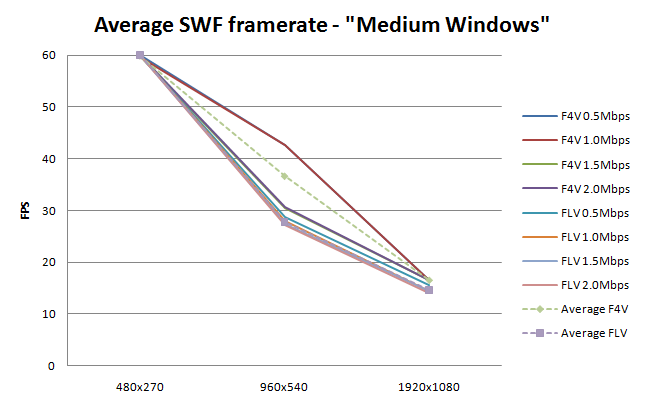

“Medium Windows” was a desktop running Windows XP SP3 at 3GHz (Intel single core) with 4GB memory. Tests used Firefox 3.6.8 and Flash Player 10.1.82.0.

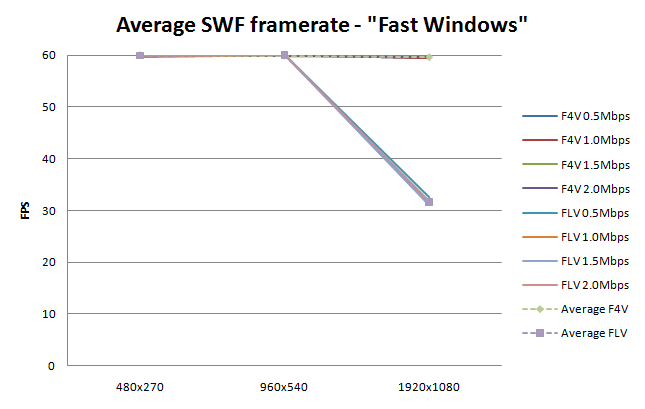

“Fast Windows” was a desktop machine running Windows 7 64-bit at 3.33GHz (Intel Core 2 Duo, 2 cores) with 4GB memory. Tests used Chrome 6.0.472.51 (beta) and Flash Player 10.1.53.0.

“Fast Mac” was a 27″ iMac running OS X 10.6.4 at 3GHz (Intel Core 2 Duo, 2 cores) with 4GB memory. Tests used Safari 5.0.1 and Flash Player 10.1.53.64.

“Slow Linux” was a laptop running Ubuntu 10.04 64-bit at 2GHz (AMD 3500+ single core) with 461MB memory. Tests used Firefox 3.6.8 and Flash Player 10.1.82.76.

Result analysis

Some points can be drawn from the results:

F4Vs have better playback quality

Simply put, H.264 decoding is faster than On2 VP6 decoding, so F4V videos easily give the best results in terms of overall playback performance of a video on a Flash website. This holds true for both single-core and multi-core machines, and for every platform tested (Windows, Macintosh, Linux). On2 VP6 actually uses a new thread on multi-core systems for better performance, but apparently this is not enough to be faster than the (usually) hardware-accelerated H.264 decoding.

Combine the better performance with the (visibly) better quality of H.264 encoding, and F4V becomes an obvious choice for video encoding in Flash. My recommendation is that F4V videos should always be used instead of FLV videos, except when a feature supported only by FLVs is needed (transparency channel; support for legacy Flash versions; cuepoints; odd video dimensions).

H.264 doesn’t have any special impact on OS X machines

F4V video performs just as well on a Macintosh, and is not particularly taxed in comparison with any other system. Especially with the newly added hardware acceleration, there doesn’t seem to be any valid concern about how the decoder performs on this platform.

Actual bitrate doesn’t have that much of an impact on performance

Technically, bitrate does matter – a higher bitrate/quality does mean a lot more data to process – but the actual impact of video bitrate on overall performance was near negligible in my tests. In cases where one needs better performance for a video, it makes more sense to lower the resolution than to lower the bitrate.
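Some back-of-the-envelope arithmetic helps put the two knobs side by side. The sketch below is not a model of decoder cost and is not part of the benchmark; it only computes how many pixels per second each resolution asks the player to produce at 30fps, and how many encoded bits per pixel a given bitrate works out to. The function names are made up for this example.

```python
def pixels_per_second(width, height, fps=30):
    """Number of decoded pixels the player has to produce each second."""
    return width * height * fps


def bits_per_pixel(bitrate_kbps, width, height, fps=30):
    """Average encoded bits available per decoded pixel."""
    return bitrate_kbps * 1000 / pixels_per_second(width, height, fps)


if __name__ == "__main__":
    for width, height in [(1920, 1080), (960, 540), (480, 270)]:
        rate = pixels_per_second(width, height)
        bpp = bits_per_pixel(2000, width, height)
        print(f"{width}x{height}: {rate} px/s, {bpp:.3f} bits/px at 2000Kbps")
```

Halving both dimensions cuts the pixel count to a quarter (62,208,000 pixels per second at 1920×1080 versus 15,552,000 at 960×540), which is the same factor as going from 2000Kbps to 500Kbps. One plausible reading of the results, offered here only as an assumption, is that the per-pixel work matters more to playback smoothness than the amount of compressed data being read.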

Linux performance is not that bad

Simply put, my tests on a very low-end machine produced results that were easily watchable. They would drop considerably below the target framerate of 30fps, but considering the target machine, and a comparison to my low-end Windows machine, it does feel pretty good overall. Not fast, but not too terrible either.

Conclusion, or TL;DR

H.264 (F4V) videos are better performance-wise. Use them when possible.

Additional notes

You’ll find the full results (with data for every video tested) here. The actual benchmark is here if you want to test it yourself (not recommended at all, as it takes at least 31 minutes to complete, and downloads 240MB of videos – and you can only see the results once it’s done).

Zeh,

As always you are the perpetual scientist. Thanks for sharing this research.

Hi Zeh.

Thanks for the great benchmarking tests.

Usually when creating video for the web, I take the end-users connection speed to the internet in consideration when calculating the bitrate for the video, and from that I get an idea of what size the video could have to be as big as possible without getting ugly.

In the future I’ll be sure to encode my videos as .f4v instead of .flv, except the few times I actually encode my .flv’s with cuepoints.

If I run in to any circumstances that differ from your conclusion in this blog post, I’ll be sure to document it and let you know.

Thanks for sharing and being the coolest brazilian 🙂

Felix

Hi Zeh,

This is very interesting but i wonder if it also matters what method is being used to play the video, FLVPlayback, VideoPlayer or OSMF?

Tim

On a sidenote. When deciding the width x height for the video it’s “good practice” to keep it divisible by 16 (size of the macroblocks), makes it “easier” to decode. Or at least that’s what I’ve learned. But maybe it’s not a big issue nowadays.

And what about mp4?

Zeh. Great test. Though would like to see the same tests performed with earlier versions of the flash player. Tried using H.264 on a project last year, but DID have problems with dropped frames, etc on slower windows machines…I’m assuming due to lack of hardware acceleration or?? My understanding is that H.264 DOES require more cpu overhead….I’m guessing your test results are mostly due to performance gains with the latest fp?

Also, what do you use to encode your H.264’s and what settings do you use?

@Philippe: In this context, MP4 is the same as F4V.

@Tim E: I don’t think it’d matter – the AS side is minimal, only streaming the NetStream and displaying it inside a Video instance. I used my own VideoContainer class but I believe it’d be the same with any other player. Maybe OSMF is different in terms of low-level implementation (haven’t used it yet), but considering the hard parts – decoding, rendering – are done by the Flash Player’s internals, I think any other player would give the same results.

@eco_bach: I’m pretty sure older versions of the Flash Player would have much worse F4V support, especially if they don’t have hardware decoding available (I know it was only added in more recent versions, although I’m not sure which ones specifically). But in a way, Flash 10.1 is already pretty widespread, and this test was trying to get a general guideline for future projects, so I think that focusing on what’s the latest version /now/ is the better option.

And true, I forgot to list the encoding settings – will add it now.

I’d also be very interested to see the full encoding settings used.

We do a lot of work with video, including ‘quasi-fullscreen’ (thanks for the new term) and have tried several encoding tools but somehow are experiencing dropped frames and lip-sync issues. Other videos from external sources similar in bitrate and resolution, also using H.264, seem to perform better under the same environment and player.

Thanks

@Mark: I’ve added the settings above under the ‘Test rationale’ block. In a nutshell, for F4V I basically left everything at default on Adobe Media Encoder (CS4), using 2-pass encoding and VBR; but granted, audio quality/sync was not a parameter on the test. Personally I haven’t experienced loss of sync with F4V, but I guess I haven’t had to work with voice-intensive content recently.

Since Flash as a plugin will always scale to the available browser real estate, I’m wondering how useful your results are considering we don’t know the actual rendering size used in your tests. I know this isn’t actually all that easy to determine. For example, I’m running your test on Linux, with a 1400×1050 monitor. With Ubuntu, Firefox, etc. taking up some of the screen I have no idea what the actual rendering size is. Width is clearly 1050, since I’m running Firefox fullscreen and the video right and left edges are the edges of the monitor’s display. I wish the benchmark actually tested the plugin operating at the size requested by the video, although I guess that would mean a monitor taller than 1920×1080, right?

Chris: I considered the video size to be irrelevant because I wanted to compare decoding performance, not rendering performance; that way, as long as the video was always rendered at the same size under the same platform, I could focus on the *relative* differences in playing it. Saying that video A plays at 30fps while video B plays at 15fps means that the platform spends more time decoding video B, and since they were being rendered at the same size, the impact of time spent rendering would be equal.

Of course this also means that comparison /between platforms/ is not a point of the test as the numbers would be bogus – there’s no absolute measurement done.

The point of the test was determining what plays better between FLV and F4V, and as a side objective, whether video bitrate had much impact in performance – under different environments. Given that, I do believe the results were useful.

If anything, it’s pretty easy to repeat the tests with a fixed resolution – loading the page and changing the object width/height with firebug will do the trick. I have no reason to believe that the relative results would be any different, however.

this was very helpful, thank you.

Any suggestions on how to set up 4 concurrent videos in 5 frames (so 20 videos)? I have 4 FLVPlayback instances in 5 frames (as I understand, I must use a 1:1 setup as each are playing different videos and analyzing different cuepoints). Needless to say, this is incredibly slow, and gets slower and slower as I switch between frames.

thanks for any advice,

Cindy

thank’s zeh, thank’s a lot

The only things I would add (if you do any future testing) would be the impact of external vs. embedded video, and the impact of filtering choices like deblocking and video smoothing.

Really interesting, thanks for sharing!!